Teaching Hospital–Based Medical Education Quality Framework

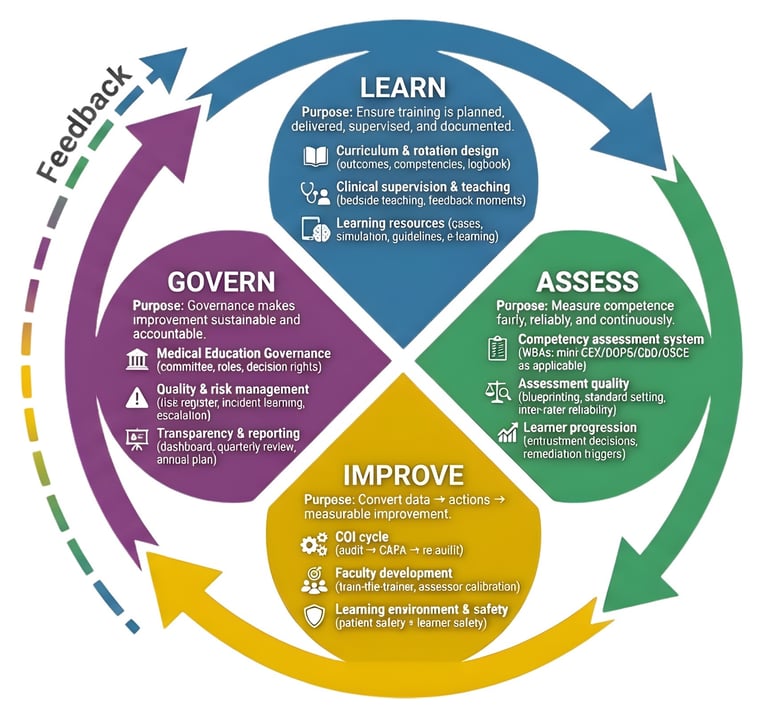

Learn–Assess–Improve–Govern

What it is: A lightweight but rigorous structure that helps teaching hospitals and universities build a measurable medical education system—without over-engineering.

Where it fits: Designed for teaching hospitals and health sciences universities — including Medicine, Dentistry, and Dental Public Health — where clinical training, community placements, prevention programs, and population health initiatives require structured supervision, measurable assessment, continuous improvement, and accountable governance.

Why this framework exists

Common problems this addresses:

“We teach well” but outcomes and progression are unclear

Assessment varies by assessor and department

Feedback exists but does not drive improvement actions

Governance is ad hoc; decisions are not data-driven

The four pillars

These four pillars connect learning to reliable assessment, continuous improvement, and leadership governance.

LEARN

Curriculum & rotation design (outcomes, competencies, logbook)

Clinical supervision & teaching (bedside teaching, feedback moments)

Learning resources (cases, simulation, guidelines, e-learning)

ASSESS

Competency assessment system (workplace-based assessments [WBAs] and objective structured clinical examinations [OSCEs], as applicable)

Assessment quality (blueprinting, standard setting, inter-rater reliability)

Learner progression (entrustment decisions, remediation triggers)

IMPROVE

Continuous quality improvement (CQI) cycle: audit → corrective and preventive action (CAPA) → re-audit

Faculty development (train-the-trainer, assessor calibration)

Learning environment & safety (patient safety + learner safety)

GOVERN

Medical Education Governance (committee, roles, decision rights)

Quality & risk management (risk register, incident learning, escalation)

Transparency & reporting (dashboard, quarterly review, annual plan)

KPI starter set (example list, customizable)

% rotations with defined outcomes + logbook requirements (target ≥ 90%)

Supervision ratio compliance (e.g., % shifts meeting supervision standard)

Faculty teaching engagement (% faculty meeting minimum sessions)

WBA completion rate per trainee per month (minimum threshold defined locally)

Assessor calibration coverage (% assessors calibrated within the last 6 months)

Assessment reliability signal (e.g., moderation concordance %)

CAPA closure on time (% actions closed by due date)

Re-audit pass rate (% issues improved at re-audit after 60–90 days)

Remediation success rate (% learners improved after support plan)

Quarterly Medical Education Quality Review held (Y/N + attendance rate)

Learner experience score (pulse survey, response rate + score)

Patient safety learning integration (# cases or morbidity and mortality [M&M] reviews linked to learning objectives)
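Most starter KPIs are simple ratios checked against a threshold. The Python sketch that follows computes two of them, CAPA closure on time and assessor calibration coverage, and then applies a target check. It is illustrative only. The `CapaAction` record, the function names, the `as_of` reporting date, and the 183-day reading of "6 months" are all assumptions rather than part of the framework, and the data are invented.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class CapaAction:
    """Hypothetical record for one corrective/preventive action."""
    due: date
    closed: Optional[date] = None  # None = still open


def pct(numerator: int, denominator: int) -> Optional[float]:
    """Percentage, or None when there is nothing to measure yet."""
    if denominator == 0:
        return None
    return 100.0 * numerator / denominator


def capa_on_time_rate(actions: list[CapaAction], as_of: date) -> Optional[float]:
    """% of actions due by `as_of` that were closed on or before their due date."""
    due = [a for a in actions if a.due <= as_of]
    on_time = sum(1 for a in due if a.closed is not None and a.closed <= a.due)
    return pct(on_time, len(due))


def calibration_coverage(
    last_calibrated: dict[str, Optional[date]], today: date, window_days: int = 183
) -> Optional[float]:
    """% of assessors whose last calibration falls within the window (assumed ~6 months)."""
    current = sum(
        1
        for d in last_calibrated.values()
        if d is not None and 0 <= (today - d).days <= window_days
    )
    return pct(current, len(last_calibrated))


def status(value: Optional[float], target: float) -> str:
    """Compare a KPI value (in %) with its minimum target."""
    if value is None:
        return "NO DATA"
    return "MET" if value >= target else "BELOW TARGET"


# Illustrative data only.
actions = [
    CapaAction(due=date(2026, 3, 31), closed=date(2026, 3, 20)),  # on time
    CapaAction(due=date(2026, 3, 31), closed=date(2026, 4, 10)),  # late
    CapaAction(due=date(2026, 4, 30)),                            # open, overdue
    CapaAction(due=date(2026, 4, 30), closed=date(2026, 4, 30)),  # on time
    CapaAction(due=date(2026, 6, 30)),                            # not yet due
]
assessors = {
    "A": date(2026, 1, 15),  # 166 days before 30 Jun 2026: current
    "B": date(2025, 11, 1),  # 241 days: lapsed
    "C": None,               # never calibrated
}

print(capa_on_time_rate(actions, as_of=date(2026, 4, 30)))      # 50.0
print(calibration_coverage(assessors, today=date(2026, 6, 30)))  # about 33.3 (1 of 3)
print(status(pct(18, 20), 90.0))  # 18 of 20 rotations defined -> MET
```

Counting only actions that are already due at the reporting date is one reasonable reading of "% actions closed by due date". A program could instead count every action raised in the period. Whichever definition is chosen belongs in the Weeks 3–4 KPI definitions so that results stay comparable from quarter to quarter.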

Optional Extension KPIs: Community / Dental Public Health

% community/outreach sites meeting minimum training criteria (target ≥ 90%)

Prevention pathway adherence rate (target defined locally)

Follow-up/referral completion rate after screening (target defined locally)

Education delivery rate (% eligible encounters with education delivery documented; target ≥ 85% or local)

Underserved reach indicator (target defined locally)

Outreach infection prevention and control (IPC) & safety compliance (target ≥ 95% or local)
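The extension KPIs use two kinds of target. Some have a framework default that a site may override ("≥ 85% or local"). Others have no default at all ("defined locally"). The minimal Python sketch that follows shows one way to resolve both, assuming targets are kept in a simple key-value table. The KPI keys, the name `resolve_targets`, and the local values in the example are hypothetical placeholders, not recommended thresholds. The sketch also lets a site override any default.

```python
from typing import Optional

# Framework defaults from the extension KPI list. Values are in each KPI's own
# unit (mostly %); None means "target defined locally" (no framework default).
# The KPI keys are hypothetical names chosen for this sketch.
DEFAULT_TARGETS: dict[str, Optional[float]] = {
    "community_sites_meeting_criteria": 90.0,
    "prevention_pathway_adherence": None,
    "followup_referral_completion": None,
    "education_delivery_rate": 85.0,
    "underserved_reach": None,
    "outreach_ipc_safety_compliance": 95.0,
}


def resolve_targets(local: dict[str, float]) -> dict[str, float]:
    """Overlay local targets on the defaults and require every KPI to end up with one."""
    unknown = sorted(set(local) - set(DEFAULT_TARGETS))
    if unknown:
        raise KeyError(f"unknown KPI keys: {unknown}")

    resolved: dict[str, float] = {}
    missing: list[str] = []
    for kpi, default in DEFAULT_TARGETS.items():
        target = local.get(kpi, default)  # a local value always wins
        if target is None:
            missing.append(kpi)
        else:
            resolved[kpi] = target
    if missing:
        raise ValueError(f"no local target set for: {missing}")
    return resolved


# Hypothetical local values, for illustration only (not recommendations).
targets = resolve_targets({
    "prevention_pathway_adherence": 80.0,
    "followup_referral_completion": 70.0,
    "underserved_reach": 30.0,
    "education_delivery_rate": 90.0,  # stricter than the 85% default
})
print(targets["education_delivery_rate"])         # 90.0
print(targets["outreach_ipc_safety_compliance"])  # 95.0 (framework default)
```

The function raises an error when a locally defined target is missing. This stops a dashboard from silently showing a KPI that has no threshold.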

Adoption pathway (90 days, high-level)

Weeks 1–2: baseline scan + gap map

Weeks 3–4: KPI definitions + dashboard skeleton

Weeks 5–8: pilot in 1–2 departments

Weeks 9–12: governance cadence + scale plan

Contact

Building measurable, internationally aligned medical education quality systems for teaching hospitals, health sciences universities, and dental/community-based programs.

info@medical-edu.com

© 2026. All rights reserved.